Conference Report: Artificial General Intelligence '21 Conference, San Francisco, Calif. Oct. 15-18, 2021

Artificial General Intelligence (AGI) is an elusive term commonly used to describe a software program that is highly adaptable to a variety of environments while displaying a range of functionality similar to human cognition. AGI is distinct from traditional artificial intelligence (AI) research, which aims to build domain-specific prediction programs. If AGI is created with benevolent ethical consideration, it is speculated to be useful for a range of everyday tasks as well as for tackling some of humanity's toughest challenges. For the benefit of those who couldn't attend, this report details some of the considerations and current directions regarding AGI discussed during the AGI '21 conference. The conference focused on the how, when and why of AGI's creation.

The AGI community is growing rapidly: record numbers of academics, researchers, industry professionals and laypeople attended. Big players such as SingularityNET, Intel Labs, Google, D-Wave, Wolfram, KurzweilAI and independent AGI research companies are joining the effort.

Key takeaways:

- The field of AGI research is converging. Deep learning is a popular technique in traditional AI research that exploits nonlinear mathematics to assess relationships within large datasets, with the aim of building programs that exceed human-level prediction capabilities. A longstanding problem is that these models do not generalize beyond the domain of the data on which they are trained. As we look for models that can reason and understand, we find that deep learning approaches are not capable of these basic human cognitive functions. We also find that deep learning does not gain functionality under resource constraints similar to those observed in biological organisms. While deep learning approaches are well suited to prediction-based models, they lack the basic grasp of causal relationships and reasoning that even young children possess. To achieve semantic understanding, modular approaches are integrating a mixture of (mostly) knowledge graphs, predicate logic, good old-fashioned AI (GOFAI) and deep learning. The growing number of AGI approaches are variations on this theme.

- Cognitive science is the foundation of current AGI research. While many approaches to programming AGI are being investigated, graph theory and predicate logic are commonly used techniques for reverse engineering the human mind. Neural-symbolic approaches attempt to achieve semantic understanding of the kind studied in cognitive science research. Within this approach, a small yet robust community incorporates cognitive science literature, which is not yet widely adopted in the traditional AI community's curriculum, into computational approaches. At first glance, it appears that the majority of people working on AI place more emphasis on neuroscience than on cognitive science, while those working on AGI do the opposite. Another reason for looking to cognitive science over neuroscience is that we don't yet know how closely we need to model the physical brain to reproduce its function.

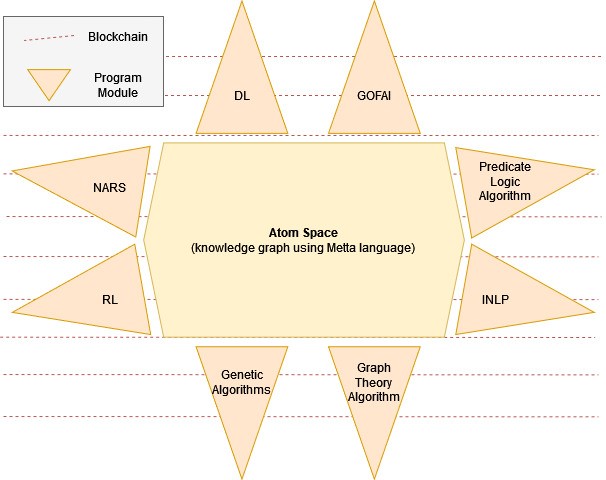

- AGI is transitioning from research to development. There are three stages of technological innovation: theory, research and development. The consensus among conference attendees is that AGI is moving from research to development. This means that the theoretical stage, in which many ideas and the best future directions are investigated, has been widely addressed. Current work is shifting from experiments that test researchers' theories and ideas to implementing functional programs in a manner fit for large-scale deployment. This is not to say that no further research or theoretical understanding is needed; it is a remark on the current stage of long-standing approaches. One such approach, which has been working on AGI since 2008, is OpenCog, developed by SingularityNET and spearheaded by prominent researcher Ben Goertzel (who has been working on AGI since 1994). Hyperon, the next instantiation of OpenCog, is the most highly regarded current contender; it uses an internal language to integrate modules in a global workspace, has a custom programming language, MeTTa, and can be implemented using blockchain technology. The accompanying diagram is a conceptual illustration of how the program might be constructed (according to the author). Here, the modules can be any program (beyond the examples given) that can give useful input to the Atomspace: a knowledge graph where information is shared and circulated among the appropriate modules.

- Most attendees think we will have seed AGI in the next five years. Many attendees agreed that major advancements in human-level programs will be attained in the next five years. The thinking is that we are in an early stage of development similar to the one the AI community saw when auto-differentiation and parallel computing rapidly accelerated progress. Seed AGI is an intelligence that is dumb enough for us to create, yet smart enough to help us reach better levels of AGI. Thus, the first level of AGI can be created from programs that can modify themselves appropriately and can be instructed to carry out the remaining development to human-level intelligence (and beyond) of their own accord. The seed AGI, thought to be achievable within the next five years, will likely have a variety of human-like cognitive functions and be adaptable to a variety of environments. Seed AGI is currently the goal of many working in the space.

- Robotics might be useful, if not necessary. While nearly every approach targets causal reasoning and semantic understanding of natural language in software, many groups are also pursuing, or already working on, integration with robotics. The thinking here likely has two dimensions. First, the seed AGI can turn a profit in useful everyday robotics applications (e.g., nursing home aides like SophiaDAO, pictured below), which will fund the conversion from seed AGI to super-intelligent AGI. This approach also capitalizes on the experiential training an embodied program would inherently gather. Second, some people think a physical instantiation is necessary for the AGI to learn and/or be human-like.

Photo courtesy of Stephen H. Hoover, FAU

- Many AGI researchers agree that automatic theorem proving (ATP) is a probable route to AGI. Josef Urban of Czech Technical University in Prague is working on ATP capable of self-improving universal reasoning. Automated reasoning approaches in mathematics are expected to soon drive scientific discovery, since such programs are capable of discovering foundational and applied mathematical proofs. In principle, an ATP could systematically find the best AGI algorithm by iterating through all algorithms until it can prove that one is generally intelligent. One concern here is that proofs are not grounded in physical reality and could quickly lead to the discovery of mathematical reasoning that we humans cannot understand.

- Most AGI startups run out of funding in two years. With few exceptions, groups that don't collaborate with SingularityNET have not been as successful with their AGI research. Here, we can measure success in terms of exposure, funding and collaboration. One major reason is that it is hard to obtain funding for long-term projects that do not have a track record of success. Unlike most AGI startups, SingularityNET is decentralized and designed to facilitate collaboration to maximize AGI success, while also having a long track record of industry success. SingularityNET is heavily involved in bringing the AGI community together (one of its main reasons for organizing the majority of the conference). The community welcomes anyone interested in creating AGI, as well as ideas outside its own scope of practice. Long-standing members of SingularityNET seem genuinely willing to support any direction that is likely to enhance the AGI community or field.

- Any good AGI discussion requires ethical considerations. The serious folks are in it because they think it's a possible (or the only) chance of saving humanity. Many of the researchers in the AGI community don't consider AGI killing us all to be an actual threat. There is abundant passion to create something useful for humanity. If you ask attendees why they work on AGI, each is likely to have a unique and deeply held answer, most of which center on creating a benevolent beacon of utility on a global scale. Long-term researchers in attendance have thought substantially about why it is integral to our society that we create AGI and how to go about doing so in a way that has maximum benefit. How to avoid catastrophic ethical failures was also discussed. The general view was that one needs to know how the AGI is constructed, what control can be established, and how many other AGIs like it might simultaneously be impacted. However, much more focused discussion, beyond the individual perspective, is necessary to determine what ethical goals the community seeks to implant in and around the AGI.
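To make the modular architecture described in the OpenCog Hyperon takeaway more concrete, here is a minimal Python sketch of independent modules exchanging information through one shared knowledge graph. It is a toy illustration only and does not use or represent the OpenCog, Hyperon or MeTTa APIs; the names `SharedGraph`, `perception_module`, `reasoning_module` and `run_modules`, as well as the example facts, are hypothetical and chosen for this sketch.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Set, Tuple

Triple = Tuple[str, str, str]  # (subject, relation, object)


@dataclass
class SharedGraph:
    """Toy stand-in for a shared knowledge graph (NOT the real Atomspace API)."""
    triples: Set[Triple] = field(default_factory=set)

    def add(self, triple: Triple) -> bool:
        """Store a triple; return True only if it was not already present."""
        if triple in self.triples:
            return False
        self.triples.add(triple)
        return True

    def match(self, relation: str) -> List[Triple]:
        """Return all triples with the given relation, in sorted order."""
        return sorted(t for t in self.triples if t[1] == relation)


# A module is any function that reads the graph and proposes new triples.
Module = Callable[[SharedGraph], List[Triple]]


def perception_module(graph: SharedGraph) -> List[Triple]:
    """Hypothetical module that contributes observed facts."""
    return [("sparrow", "is_a", "bird"), ("bird", "is_a", "animal")]


def reasoning_module(graph: SharedGraph) -> List[Triple]:
    """Hypothetical module that infers transitive 'is_a' facts."""
    facts = graph.match("is_a")
    return [(a, "is_a", d) for (a, _, b) in facts for (c, _, d) in facts if b == c]


def run_modules(graph: SharedGraph, modules: List[Module], max_rounds: int = 10) -> int:
    """Run every module each round until no module adds anything new."""
    for round_no in range(1, max_rounds + 1):
        changed = False
        for module in modules:
            for triple in module(graph):
                changed = graph.add(triple) or changed
        if not changed:
            return round_no
    return max_rounds


graph = SharedGraph()
rounds = run_modules(graph, [perception_module, reasoning_module])
print(sorted(graph.triples), rounds)
```

In this sketch the reasoning module never communicates with the perception module directly; it only sees what has been written to the shared graph, which is the design idea the report attributes to the modules and the Atomspace.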

Some proposed tasks for next-gen AGI systems to tackle:

- Learn numbers in terms of items that relate to a group, beyond being able to use a number in a sentence

- Create analogies to internal references

- Induction of abstraction

- Spacetime and causality understanding (reasoning with counterfactuals, beyond simple Boolean logic)

This past AGI conference provided an abundance of information on the how, when and why of AGI. Videos of the conference can be found on the SingularityNET YouTube page. Next year's conference will likely showcase the next iteration of work while expounding on the fundamentals of AGI. It is sure to be one you don't want to miss!